The Fragmented World of AI Agents and the Path to True Interoperability

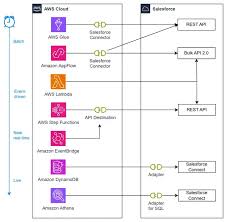

The Island of Agents Problem

Modern enterprises increasingly find themselves managing multiple specialized AI agents: CRM agents handling customer interactions, data warehouse agents processing analytics, and knowledge bots retrieving documents. Yet these agents operate in isolation, creating what industry experts call “the island of agents” phenomenon. Rather than forming an intelligent, collaborative ecosystem, these disconnected AI systems create silos of limited functionality.

Emerging Standards for Agent Collaboration

The technology sector has recognized this challenge. Google recently introduced Agent2Agent (A2A), an open protocol enabling cross-platform agent communication. Anthropic, for its part, launched the Model Context Protocol (MCP), an open standard for how agents access tools and contextual information. These complementary standards address both the cognitive (how agents think) and communicative (how agents interact) aspects of AI interoperability.

MCP acts as a bridge between AI agents and other systems by defining a common protocol for communication. Salesforce has adopted the standard too, offering MCP support that lets AI agents securely connect with and access data from various systems. Within its Agentforce platform, Salesforce uses MCP to enable AI agents to interact with Salesforce data and functionality, such as querying records, retrieving metadata, and managing objects.

However, these protocols currently rely on traditional web communication patterns such as HTTP, JSON-RPC, and Server-Sent Events (SSE), which work adequately for simple interactions but show limitations as agent ecosystems scale. Industry observers note that what’s missing is a robust, event-driven communication layer capable of supporting complex enterprise deployments.

A2A: The Potential HTTP for AI Agents

Drawing parallels to the early internet’s fragmentation before HTTP standardization, A2A aims to become the universal communication protocol for AI agents.
The protocol enables three key functions:

Built on existing web standards, A2A maintains developer familiarity while solving fundamental interoperability challenges. When combined with Anthropic’s MCP for tool standardization, the two protocols form a comprehensive framework for agent collaboration.

Scalability Challenges in Point-to-Point Architectures

While A2A solves the language problem, its current point-to-point communication model presents scalability issues:

These limitations mirror those experienced during early microservices adoption, suggesting that a similar architectural evolution may benefit agent ecosystems.

Event-Driven Architecture: The Kafka Solution

The technology community increasingly views event streaming platforms like Apache Kafka as the missing component for scalable agent collaboration. By implementing A2A over Kafka, enterprises could achieve:

Three potential implementation patterns emerge:

Toward a Unified Agent Ecosystem

The combination of standardized protocols and event-driven architecture promises to transform disconnected collections of agents into truly collaborative systems. This evolution mirrors the internet’s transition from isolated networks to a globally connected web.

As enterprises increasingly deploy diverse AI agents, those adopting both the A2A/MCP standards and event-driven infrastructure will likely gain significant competitive advantages in:

Industry analysts suggest this combined approach may become the de facto standard for enterprise AI ecosystems within the next two to three years, fundamentally changing how organizations deploy and benefit from artificial intelligence.
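
To make the contrast between point-to-point and event-driven communication more concrete, here is a minimal Python sketch. It is not Kafka, and it does not implement A2A or MCP: `InMemoryBroker`, `Event`, the `customer.updated` topic, and the agent names are all hypothetical, chosen only to illustrate the idea of agents publishing to and subscribing from a shared topic instead of calling each other directly. The link-count helpers assume the worst case in which every agent may need to reach every other agent.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, DefaultDict, Dict, List, Tuple


@dataclass
class Event:
    """A message published to a topic (hypothetical shape for this sketch)."""
    topic: str
    sender: str
    payload: Dict[str, str]


class InMemoryBroker:
    """Toy stand-in for an event streaming platform; an assumption, not Kafka."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Callable[[Event], None]]] = defaultdict(list)
        self.log: List[Event] = []  # append-only record of every published event

    def subscribe(self, topic: str, handler: Callable[[Event], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, event: Event) -> None:
        # The publisher does not know who is listening; the broker fans out.
        self.log.append(event)
        for handler in list(self._subscribers[event.topic]):
            handler(event)


def point_to_point_links(agent_count: int) -> int:
    """Direct connections needed if every agent talks to every other agent."""
    return agent_count * (agent_count - 1) // 2


def brokered_links(agent_count: int) -> int:
    """Connections needed if every agent only connects to the broker."""
    return agent_count


def run_demo() -> List[Tuple[str, str]]:
    broker = InMemoryBroker()
    received: List[Tuple[str, str]] = []

    # Two hypothetical downstream agents react to the same event independently.
    def analytics_agent(event: Event) -> None:
        received.append(("analytics", event.payload["customer_id"]))

    def knowledge_bot(event: Event) -> None:
        received.append(("knowledge", event.payload["customer_id"]))

    broker.subscribe("customer.updated", analytics_agent)
    broker.subscribe("customer.updated", knowledge_bot)

    # A hypothetical CRM agent publishes once; both subscribers are notified.
    broker.publish(Event(topic="customer.updated", sender="crm-agent",
                         payload={"customer_id": "42"}))
    return received


if __name__ == "__main__":
    print(run_demo())
    print(point_to_point_links(10), "direct links vs", brokered_links(10), "broker links")
```

In this sketch, adding a third subscriber requires no change to the publishing agent, which is the decoupling property the event-driven approach is meant to provide. A real deployment would replace the in-memory broker with a durable, partitioned log and handle delivery guarantees, ordering, and security, none of which are modeled here.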