From AI Workflows to Autonomous Agents

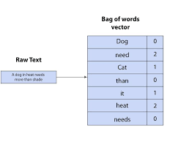

From AI Workflows to Autonomous Agents: The Path to True AI Autonomy

Building functional AI agents is often portrayed as a straightforward task: chain a large language model (LLM) to some APIs, add memory, and declare autonomy. Yet anyone who has deployed such systems in production knows the reality. Agents that perform well in controlled demos often falter in the real world, making poor decisions, entering infinite loops, or failing entirely when faced with unanticipated scenarios.

AI Workflows vs. AI Agents: Key Differences

The distinction between workflows and agents, as highlighted by Anthropic and LangGraph, is critical. Workflows orchestrate LLMs and tools through predefined code paths, while agents let the LLM dynamically direct its own process and tool use. Workflows dominate today because they work reliably. But to achieve truly agentic AI, the field must overcome fundamental challenges in reasoning, adaptability, and robustness.

The Evolution of AI Workflows

1. Prompt Chaining: Structured but Fragile

Breaking a task into sequential subtasks improves accuracy because each intermediate output can be validated before the next step runs. However, every extra step adds latency, and an error early in the chain cascades into every later step. Long chains also sometimes produce verbose but incorrect reasoning.

2. Routing Frameworks: Efficiency with Blind Spots

Directing each task to a specialized model (e.g., math problems to a math-optimized LLM) improves efficiency. Yet LLMs struggle with self-assessment: they often attempt tasks beyond their capabilities, producing confident but incorrect outputs.

3. Parallel Processing: Speed at the Cost of Coherence

Running multiple subtasks simultaneously speeds up workflows, but merging conflicting results remains a challenge. Without robust synthesis mechanisms, parallelization can produce inconsistent or nonsensical outputs.

4. Orchestrator-Worker Models: Flexibility Within Limits

A central orchestrator delegates tasks to specialized components, enabling scalable multi-step problem-solving. However, the system remains bound by predefined logic, so true adaptability is still missing.

5. Evaluator-Optimizer Loops: Limited by Feedback Quality

In these loops, one model generates an output, an evaluator critiques it, and the generator revises its output based on that feedback. But if the evaluation metric is flawed, optimization merely entrenches errors rather than correcting them.

The Four Pillars of True Autonomous Agents

For AI to move beyond workflows and achieve genuine autonomy, four critical challenges must be addressed:

1. Self-Awareness

Current agents cannot recognize uncertainty, reassess faulty reasoning, or know when to halt execution. A functional agent must monitor itself and adapt in real time to avoid compounding errors.

2. Explainability

Workflows are debuggable because each step is predefined. Autonomous agents, however, require transparent decision-making: they should justify their reasoning at every stage so that developers can diagnose and correct failures.

3. Security

Granting agents API access introduces risks that go beyond content moderation. True agent security requires architectural safeguards that block harmful or unintended actions before they are executed.

4. Scalability

While workflows scale predictably, autonomous agents become unstable as complexity grows. Solving this demands more than bigger models. It requires agents that can handle novel scenarios without breaking.

The Road Ahead: Beyond the Hype

Today’s “AI agents” are largely advanced workflows masquerading as autonomous systems. Real progress won’t come from larger LLMs or longer context windows. It will come from agents that can:

✔ Detect and correct their own mistakes
✔ Explain their reasoning transparently
✔ Operate securely in open environments
✔ Scale intelligently to unforeseen challenges

The shift from workflows to true agents is closer than it seems, but only if the focus remains on real decision-making rather than on incremental improvements to automation.
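The evaluator-optimizer loop and the self-awareness requirement to "know when to halt execution" can be illustrated together in a short sketch. The Python below is a minimal illustration under stated assumptions, not a production pattern or any framework's API. `generate` and `evaluate` are hypothetical stand-ins for LLM calls. The names `evaluator_optimizer`, `Feedback` and `LoopResult` are illustrative choices, and so are the thresholds `accept_score`, `max_iterations` and `min_improvement`. The loop stops in one of three cases: the evaluator accepts the output, scores stop improving, or a fixed iteration budget runs out.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Feedback:
    score: float   # evaluator's quality score in [0.0, 1.0]
    critique: str  # evaluator's explanation, passed back to the generator


@dataclass
class LoopResult:
    output: str
    score: float
    iterations: int
    stop_reason: str  # "accepted", "stalled", or "budget_exhausted"


def evaluator_optimizer(
    generate: Callable[[str, Optional[str]], str],
    evaluate: Callable[[str], Feedback],
    task: str,
    accept_score: float = 0.9,      # assumed acceptance threshold
    max_iterations: int = 5,        # hard budget so the loop can never run forever
    min_improvement: float = 0.01,  # assumed minimum gain required to keep iterating
) -> LoopResult:
    """Generate, evaluate, and revise until accepted, stalled, or out of budget."""
    best_output, best_score = "", float("-inf")
    critique: Optional[str] = None
    for i in range(1, max_iterations + 1):
        candidate = generate(task, critique)
        feedback = evaluate(candidate)
        improvement = feedback.score - best_score
        if feedback.score > best_score:
            best_output, best_score = candidate, feedback.score
        if best_score >= accept_score:
            return LoopResult(best_output, best_score, i, "accepted")
        if improvement < min_improvement:
            # No meaningful progress: halt instead of repeating the same revision.
            return LoopResult(best_output, best_score, i, "stalled")
        critique = feedback.critique
    return LoopResult(best_output, best_score, max_iterations, "budget_exhausted")


# Hypothetical stand-ins for LLM calls, used only to make the sketch runnable.
REQUIRED_TERMS = ["latency", "cascading", "validation"]


def make_toy_generator() -> Callable[[str, Optional[str]], str]:
    draft: List[str] = []

    def generate(task: str, critique: Optional[str]) -> str:
        # Revise the draft by adding whatever term the critique says is missing.
        if critique and critique.startswith("missing: "):
            draft.append(critique[len("missing: "):])
        return f"{task}: " + ", ".join(draft)

    return generate


def toy_evaluate(text: str) -> Feedback:
    # Score = fraction of required terms present; critique names the first missing one.
    missing = [term for term in REQUIRED_TERMS if term not in text]
    score = 1 - len(missing) / len(REQUIRED_TERMS)
    return Feedback(score, f"missing: {missing[0]}" if missing else "ok")


if __name__ == "__main__":
    result = evaluator_optimizer(make_toy_generator(), toy_evaluate, "Summarize prompt chaining")
    print(result.stop_reason, result.iterations, result.output)
    # A generator that ignores feedback makes no progress, so the loop halts early.
    stuck = evaluator_optimizer(lambda t, c: t, lambda s: Feedback(0.5, "be more specific"), "Summarize")
    print(stuck.stop_reason, stuck.iterations)
```

When run as a script, the toy generator's output is accepted on the fourth iteration. The generator that ignores feedback halts as "stalled" after two iterations. The loop still trusts `evaluate` completely. If the evaluation metric is flawed, the loop will stop on a flawed output, which is the limitation this article attributes to evaluator-optimizer loops.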