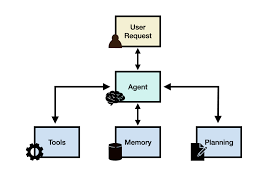

AI’s Impact on Future Information Ecosystems

The proliferation of generative AI technology has ignited a renewed focus within the media industry on how to adapt strategically to its capabilities. Media professionals now face crucial questions: What are the most effective ways to use this technology to make news production more efficient and to enhance audience experiences? Conversely, what threats do these advances pose? Is legacy media on the brink of yet another wave of disintermediation from its audiences? And how does the evolution of technology affect journalism ethics?

In response to these challenges, the Open Society Foundations (OSF) launched the AI in Journalism Futures project earlier this year. The first phase of this ambitious initiative was an open call for participants to write future-oriented scenarios exploring the potential driving forces and implications of AI within the broader media ecosystem. The project asked what might happen among various stakeholders in 5, 10, or 15 years.

As Nick Diakopoulos notes, scenarios are a valuable method for capturing a diverse range of perspectives on complex issues. The goal is not to predict the future; rather, understanding a variety of plausible alternatives can significantly inform current strategic thinking. In the end, more than 800 individuals from approximately 70 countries contributed short scenarios for analysis. The AI in Journalism Futures project then used these scenarios as the foundation for a workshop, and the ideas refined there are outlined in the project’s report. Diakopoulos emphasizes the importance of also examining the broad set of initial scenarios, which OSF graciously provided in anonymized form.
This analysis explores (1) the types of impacts identified in the scenarios, (2) the timeframes associated with those impacts (short, medium, or long term), and (3) regional differences, that is, how writers in different parts of the world emphasized distinct types of impacts. Many other questions could be asked of this data, such as the drivers of impacts, final outcomes, severity, the stakeholders involved, or the technical capabilities emphasized, but this analysis focuses primarily on impacts.

Refining the Data

The initial pool of 872 scenarios was cleaned, filtered, transformed, and verified before analysis. First, scenarios shorter than 50 words were excluded, leaving 852 scenarios for analysis. Additionally, 14 scenarios that were not written in English were translated into English using Google Sheets. To enable geographic and temporal analysis, each scenario writer’s country was mapped to its continent, and the free-text “timeframe” field was converted into a number of years.

Next, impacts were extracted from each scenario using an LLM (GPT-4 in this case). The prompts were refined through iteration around a clear definition of what constitutes an “impact.” Diakopoulos defined an impact as “a significant effect, consequence, or outcome that an action, event, or other factor has in the scenario.” This definition covers not only the final state of a scenario but also intermediate outcomes. The LLM was instructed to extract distinct impacts, representing each one with a one-sentence description and a short label.
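The filtering and transformation steps described above can be sketched in Python. This is a minimal illustration, not the pipeline actually used: the `Scenario` record, the partial `COUNTRY_TO_CONTINENT` lookup, the timeframe formats the regular expression handles, and the `BASE_YEAR` of 2024 are all assumptions made for the example.

```python
import re
from dataclasses import dataclass
from typing import Dict, List, Optional

MIN_WORDS = 50  # scenarios shorter than this were excluded
BASE_YEAR = 2024  # assumption: the year the scenarios were written

# Hypothetical, partial lookup for illustration; a real mapping covers every country.
COUNTRY_TO_CONTINENT: Dict[str, str] = {
    "Kenya": "Africa",
    "Nigeria": "Africa",
    "India": "Asia",
    "Philippines": "Asia",
    "Germany": "Europe",
    "Brazil": "South America",
    "United States": "North America",
}


@dataclass
class Scenario:
    text: str
    country: str
    timeframe: str  # free text, e.g. "in 10 years" or "2035"


def timeframe_to_years(timeframe: str, base_year: int = BASE_YEAR) -> Optional[int]:
    """Convert a free-text timeframe into a number of years from base_year.

    Uses the first number in the text, so "10-15 years" becomes 10. Four-digit
    numbers are treated as calendar years. Returns None if no number is found.
    """
    match = re.search(r"\d+", timeframe)
    if match is None:
        return None
    value = int(match.group())
    return value - base_year if value >= 1000 else value


def preprocess(scenarios: List[Scenario]) -> List[dict]:
    """Drop short scenarios, then attach a continent and a numeric timeframe."""
    rows = []
    for s in scenarios:
        if len(s.text.split()) < MIN_WORDS:
            continue  # too short to analyze
        rows.append(
            {
                "text": s.text,
                "continent": COUNTRY_TO_CONTINENT.get(s.country, "Unknown"),
                "years": timeframe_to_years(s.timeframe),
            }
        )
    return rows
```

Translation and LLM-based impact extraction would then run on the `text` field of the surviving rows; unmapped countries are kept under "Unknown" rather than dropped so they can be reviewed by hand.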
For instance, one impact was described as “The proliferation of flawed AI systems leads to a compromised information ecosystem, causing a general doubt in the reliability of all information,” and labeled “Compromised Information Ecosystem.” To check the accuracy of the extraction, a random sample of five scenarios was reviewed manually, comparing the extracted impacts against the definition. All extracted impacts passed these checks, which gave enough confidence to scale the extraction to the entire dataset. The process identified 3,445 impacts across the 852 scenarios.

A typology of impact types was then developed from the 3,445 impact descriptions, using a novel method for qualitative thematic analysis from a Stanford University study. This approach clusters the input texts, synthesizes concepts that capture abstract connections among them, and produces a scoring definition for each concept, which is used to assess how relevant each original text is to it. For example, a concept like “AI Personalization” might be defined by the question, “Does the text discuss how AI personalizes content or enhances user engagement?” Each impact description was then scored against these concepts to tabulate how often each concept occurs.

Impacts of AI on Media Ecosystems

Through this approach, 19 impact themes emerged, each with a corresponding scoring definition. Interestingly, many scenarios articulated themes around how AI intersects with fact-checking, trust, misinformation, ethics, labor concerns, and evolving business models. Although some concepts are not entirely distinct from one another, this categorization offers a meaningful overview of the key ideas in the data.

Distribution of Impact Themes

Comparing these findings with those in the OSF report reveals some discrepancies. For instance, while the report emphasizes personalization and misinformation, these themes were less prevalent in the analyzed scenarios.
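The scoring-and-tabulation step can be illustrated with a small sketch. It assumes each impact description has already received a relevance score in [0, 1] for every concept (in the study these came from the LLM-based method; here they are written by hand) and uses a hypothetical threshold of 0.75 to decide whether an impact matches a concept. The “AI Personalization” question is quoted from the text; the second concept’s wording and the threshold are illustrative assumptions.

```python
from collections import Counter
from typing import Dict, List

# Concept name -> scoring question. In the real method the questions are posed
# to an LLM to produce the scores; here they only define which concepts to count.
CONCEPTS: Dict[str, str] = {
    "AI Personalization": (
        "Does the text discuss how AI personalizes content or enhances user engagement?"
    ),
    # Hypothetical wording for a second theme.
    "AI and Misinformation": "Does the text discuss how AI creates or spreads misinformation?",
}

# Assumed cut-off: a score at or above this counts as a match.
THRESHOLD = 0.75


def tabulate(scores: List[Dict[str, float]], threshold: float = THRESHOLD) -> Counter:
    """Count how many impact descriptions match each concept.

    `scores` has one dict per impact description, mapping a concept name to a
    relevance score in [0, 1]. An impact may match several concepts, so the
    counts can sum to more than the number of impacts.
    """
    counts: Counter = Counter({name: 0 for name in CONCEPTS})
    for impact_scores in scores:
        for name, score in impact_scores.items():
            if score >= threshold:
                counts[name] += 1
    return counts


def shares(counts: Counter, n_impacts: int) -> Dict[str, float]:
    """Express each concept's count as a fraction of all impact descriptions."""
    return {name: count / n_impacts for name, count in counts.items()}


# Hand-written scores for three impact descriptions (illustrative only).
example_scores = [
    {"AI Personalization": 1.0, "AI and Misinformation": 0.0},
    {"AI Personalization": 0.5, "AI and Misinformation": 0.75},
    {"AI Personalization": 0.8, "AI and Misinformation": 0.5},
]
counts = tabulate(example_scores)
print(counts.most_common())  # [('AI Personalization', 2), ('AI and Misinformation', 1)]
```

Because one impact can match several themes, the shares are best read as “fraction of impacts touching this theme” rather than as a partition of the data.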
Moreover, themes such as the rise of AI agents and audience fragmentation did appear in the scenarios but did not form significant clusters in the analysis. To capture potentially interesting but less prevalent impacts, the clustering was rerun with a smaller minimum cluster size. This adjustment yielded hundreds more concept themes, surfacing longer-tail issues. Positive visions for generative AI included reduced language barriers and greater accessibility for marginalized audiences, while concerns about societal fragmentation and privacy were also raised.

Impacts Over Time and Around the World

The analysis also examined how impacts varied with the timeframe writers chose and with their geographic location. A chi-squared test indicated that “AI Personalization” skews toward long-term impacts, while both “AI Fact-Checking” and “AI and Misinformation” skew toward shorter-term ones. This suggests that scenario writers see misinformation impacts as imminent threats, likely reflecting ongoing developments in the media landscape.

By region, “AI Fact-Checking” was noted more frequently by writers from Africa and Asia, while “AI and Misinformation” was less prevalent in scenarios from African writers and more prevalent in those from Asian writers. This suggests that perspectives on AI’s role in the media ecosystem diverge across regions.
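The source does not say how timeframes were bucketed or which software ran the test, so the following is a pure-Python sketch of a Pearson chi-squared test of independence under stated assumptions: one row for impacts that match a theme and one for those that do not, and one column per timeframe bucket (short, medium, long). The Pearson residuals show the direction of any skew: a positive residual in the short-term column means the theme appears there more often than independence would predict. The counts in the usage example are made up.

```python
import math
from typing import List, Sequence, Tuple


def chi_square(table: Sequence[Sequence[float]]) -> Tuple[float, int, List[List[float]], List[List[float]]]:
    """Pearson chi-squared test of independence for an r x c contingency table.

    Returns (statistic, degrees_of_freedom, expected_counts, pearson_residuals).
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    # Expected count under independence: row total * column total / grand total.
    expected = [[r * c / n for c in col_totals] for r in row_totals]
    stat = 0.0
    residuals = []
    for obs_row, exp_row in zip(table, expected):
        res_row = []
        for o, e in zip(obs_row, exp_row):
            stat += (o - e) ** 2 / e
            res_row.append((o - e) / math.sqrt(e))
        residuals.append(res_row)
    dof = (len(table) - 1) * (len(col_totals) - 1)
    return stat, dof, expected, residuals


def chi2_sf(x: float, dof: int) -> float:
    """P(X >= x) for a chi-squared variable, i.e. the test's p-value.

    Computed as the regularized upper incomplete gamma Q(dof/2, x/2).
    """
    a, z = dof / 2.0, x / 2.0
    if z <= 0:
        return 1.0
    log_prefactor = -z + a * math.log(z) - math.lgamma(a)
    if z < a + 1:
        # Series for the lower regularized gamma P(a, z); Q = 1 - P.
        term = total = 1.0 / a
        ap = a
        for _ in range(1000):
            ap += 1
            term *= z / ap
            total += term
            if abs(term) < abs(total) * 1e-15:
                break
        return 1.0 - total * math.exp(log_prefactor)
    # Continued fraction (modified Lentz method) for Q(a, z).
    tiny = 1e-300
    b = z + 1.0 - a
    c = 1.0 / tiny
    d = 1.0 / b
    h = d
    for i in range(1, 1000):
        an = -i * (i - a)
        b += 2.0
        d = an * d + b
        if abs(d) < tiny:
            d = tiny
        c = b + an / c
        if abs(c) < tiny:
            c = tiny
        d = 1.0 / d
        delta = d * c
        h *= delta
        if abs(delta - 1.0) < 1e-14:
            break
    return h * math.exp(log_prefactor)


if __name__ == "__main__":
    # Made-up counts: row 0 = impacts matching a theme, row 1 = all other impacts;
    # columns = short-, medium-, and long-term scenarios.
    table = [[30, 20, 10], [70, 80, 90]]
    stat, dof, expected, residuals = chi_square(table)
    p_value = chi2_sf(stat, dof)
    print(f"chi2 = {stat:.2f}, dof = {dof}, p = {p_value:.4f}")  # chi2 = 12.50, dof = 2, p = 0.0019
    print("theme-row residuals:", [round(r, 2) for r in residuals[0]])  # [2.24, 0.0, -2.24]
```

Here the theme is over-represented in short-term scenarios and under-represented in long-term ones, the pattern the text reports for “AI Fact-Checking” and “AI and Misinformation.” The same function applies to the regional comparison by replacing the timeframe columns with continents.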