The Rise and Limits of GPT Models: What They Can’t Do (And What Comes Next)

GPT Models: The Engines of Modern AI

GPT models have revolutionized AI, offering speed, flexibility, and generative power that older architectures like RNNs couldn’t match. Without the transformer architecture (introduced in 2017) and the first models built on it, such as GPT-1 (2018) and BERT (2018), today’s AI landscape, especially generative AI, wouldn’t exist.

Yet, despite their dominance, GPT models have fundamental flaws—hallucinations, reasoning gaps, and context constraints—that make them unsuitable for some critical tasks.

So, what can’t GPT models do well? Which limitations can be fixed, and which are unavoidable?

How GPT Models Work (And Why They’re Different)

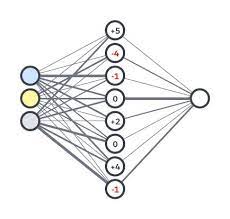

GPT models are transformer-based: self-attention lets them process every token of an input in parallel, whereas RNNs read one token at a time. (Output is still generated one token at a time.) This allows them to:

✔ Analyze entire sentences at once

✔ Generate coherent, context-aware responses

✔ Scale efficiently with more data

But this architecture also introduces key weaknesses.
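To make the parallel processing described above more concrete, here is a minimal sketch of scaled dot-product self-attention in plain Python. It rests on several assumptions: the three "token embeddings" are made up, queries, keys, and values are all the same vectors, and the learned projection matrices, multiple heads, and masking that real GPT models use are left out. The function names are illustrative, not any library's API.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values.

    Assumption: learned projections are omitted to keep the sketch short.
    """
    dim = len(vectors[0])
    outputs = []
    # Each position's result depends only on the inputs, never on another
    # position's output, so real hardware can compute all of them at once.
    for query in vectors:
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in vectors]
        weights = softmax(scores)
        # Weighted average of every token vector in the sequence.
        mixed = [sum(w * vec[i] for w, vec in zip(weights, vectors))
                 for i in range(dim)]
        outputs.append(mixed)
    return outputs

# Three made-up 2-dimensional "token embeddings".
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
contextual = self_attention(tokens)
```

Each entry of `contextual` is a blend of all three input vectors, which is what "analyzing the entire sentence at once" amounts to in this toy setting. An RNN, by contrast, would have to finish position 1 before it could start position 2.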

The 3 Biggest Limitations of GPT Models

1. Hallucinations: When AI Makes Things Up

Why it happens:

- Models are trained to produce a plausible continuation, not to check facts. When the prompt or context window lacks the needed information, they “guess” instead of saying so.

- Attention mechanisms sometimes focus on irrelevant data, leading to nonsense answers.
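One way to picture the “guessing” described above is to look at the final decoding step. The sketch below is a toy illustration, not how any specific GPT model is implemented: the four-word vocabulary and both sets of logits are hypothetical, and greedy decoding is only one of several decoding strategies.

```python
import math

def softmax(logits):
    """Convert raw scores into a probability distribution."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def greedy_pick(vocab, logits):
    """Return the most probable token and its probability."""
    probs = softmax(logits)
    best = max(range(len(vocab)), key=lambda i: probs[i])
    return vocab[best], probs[best]

# Hypothetical toy vocabulary and logits, chosen only for illustration.
vocab = ["Monday", "Tuesday", "Sunday", "Friday"]

# Case 1: one option clearly dominates.
confident = greedy_pick(vocab, [0.1, 0.2, 6.0, 0.3])

# Case 2: the scores are nearly flat, yet a token is still emitted.
unsure = greedy_pick(vocab, [0.10, 0.12, 0.11, 0.13])
```

In both cases the function returns an answer. In the second case the winning token has only slightly more than a one-in-four probability, but nothing in this decoding step stops it from being printed as if it were a fact.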

Can it be fixed?

- Partially. Better training data and prompt engineering reduce hallucinations, but they are baked into transformer design, so they can be made rarer rather than eliminated.

2. Struggles with Long-Form Data

Why it happens:

- Inputs larger than the context window (like books) have to be split into chunks that are processed separately, which loses coherence across chunks.

- They often miss connections between distant sections of text.
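The chunking problem above can be shown with a small sketch. Everything here is an assumption made for illustration: the "document" is a made-up token list, the window size of 6 stands in for a real context window, and `chunk` and `chunks_containing` are hypothetical helper names.

```python
def chunk(tokens, size):
    """Split a token list into consecutive, non-overlapping windows."""
    return [tokens[i:i + size] for i in range(0, len(tokens), size)]

def chunks_containing(chunks, word):
    """Indices of the chunks in which `word` appears."""
    return [i for i, c in enumerate(chunks) if word in c]

# A made-up document: a name appears early, a question about it much later.
document = (["Alice", "hid", "the", "key"]
            + ["filler"] * 8
            + ["who", "hid", "the", "key", "?"])

chunks = chunk(document, size=6)

answer_location = chunks_containing(chunks, "Alice")   # where the fact lives
question_location = chunks_containing(chunks, "?")     # where it is needed
```

Here the fact sits in chunk 0 and the question in chunk 2, so a model that sees one chunk at a time never has both in view. Overlapping windows or summaries passed between chunks can narrow the gap, but they add cost and can still drop details.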

Can it be fixed?

- Not fully. Caching tricks help, but the cost of standard self-attention grows quadratically with input length, and transformers weren’t built for deep analysis of very long documents.

3. They Can’t Really “Reason”

Why it happens:

- GPT models recognize patterns but don’t logically deduce answers.

- Example: “What day of the week was August 24, 1572?”

- A human can calculate the answer by counting back from a known date.

- A GPT model answers reliably only if the date appears in its training data, and otherwise often guesses wrong.
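For contrast, the calculation in the example above is easy to write as a deterministic procedure. The sketch below uses a standard Julian Day Number formula. The function names are my own, and the choice of calendar is an assumption the question leaves open: the Gregorian calendar was only introduced in October 1582, so a date in 1572 would normally be read in the Julian calendar.

```python
DAY_NAMES = ["Monday", "Tuesday", "Wednesday", "Thursday",
             "Friday", "Saturday", "Sunday"]

def julian_day_number(year, month, day, calendar="gregorian"):
    """Julian Day Number for a date in the Gregorian or Julian calendar."""
    a = (14 - month) // 12          # 1 for January and February, else 0
    y = year + 4800 - a             # shift so the counting year starts in March
    m = month + 12 * a - 3          # March = 0, ..., February = 11
    days = day + (153 * m + 2) // 5 + 365 * y + y // 4
    if calendar == "gregorian":
        return days - y // 100 + y // 400 - 32045
    if calendar == "julian":
        return days - 32083
    raise ValueError("calendar must be 'gregorian' or 'julian'")

def weekday_name(year, month, day, calendar="gregorian"):
    # A Julian Day Number that is a multiple of 7 falls on a Monday.
    return DAY_NAMES[julian_day_number(year, month, day, calendar) % 7]

julian_answer = weekday_name(1572, 8, 24, calendar="julian")
gregorian_answer = weekday_name(1572, 8, 24, calendar="gregorian")
```

With these assumptions, `julian_answer` is "Sunday" and `gregorian_answer` is "Thursday" (the same date read in the proleptic Gregorian calendar). The procedure gives the same result every time, which is the property pattern matching alone does not guarantee.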

Can it be fixed?

- No. True reasoning requires symbolic logic, not just pattern matching.

The Future: Can GPT Models Improve?

Option 1: Patch the Transformer

- Better attention mechanisms (to reduce over-focusing)

- Context caching (to help with long documents)

- Multi-step “reasoning” tricks (breaking problems into parts)

But these are band-aids, not true fixes.

Option 2: Move Beyond Transformers

New architectures are emerging:

- Megalodon – Designed for GPT-like speed with unlimited context length

- Mamba (a state-space model) – Efficient, scalable (cost grows linearly with sequence length), and potentially better at reasoning

- Hybrid Models – Combining transformers with symbolic AI for true logic

The Bottom Line

✅ GPT models are here to stay (for now)

❌ But they’ll never be perfect at reasoning or long-context tasks

🚀 The next AI breakthrough may come from a totally new architecture

What’s next? Keep an eye on Mamba, Megalodon, and neurosymbolic AI—they might just dethrone transformers.