Salesforce has built and maintains a fairly definitive glossary of generative Artificial Intelligence terminology, Tectonic thought was good enough to share in our insights. Salesforce Generative AI Glossary.

Help everyone in your company understand key generative AI terms, and what they mean for your customer relationships. Fun fact: This article was (partially) written using generative AI. Bookmark this!

This generative AI glossary will be updated regularly.

Does it seem like everyone around you is casually tossing around terms like “generative AI,” “large language models,” or “deep learning”? Salesforce has created a primer on everything you need to know to understand the newest, most impactful technology that’s come along in decades. Let’s dive into the world of generative AI.

Salesforce has built a list of the most essential terms that will help everyone in your company — no matter their technical background – understand the power of generative AI. Each term is defined based on how it impacts both your customers and your team.

And to highlight the real-world applications of generative AI, we put it to work for this article. Salesforce experts weighed in on the key terms, and then let a generative AI tool lay the groundwork for this glossary. Each definition needed a human touch to get it ready for publication, but it saved loads of time.

Anthropomorphism

The tendency for people to attribute human motivation, emotions, characteristics or behavior to AI systems. For example, you may think the model or output is ‘mean’ based on its answers, even though it is not capable of having emotions, or you potentially believe that AI is sentient because it is very good at mimicking human language. While it might resemble something familiar, it’s essential to remember that AI, however advanced, doesn’t possess feelings or consciousness. It’s a brilliant tool, not a human being.

- What it means for customers: On the plus side, customers might feel more connected or engaged with AI systems that exhibit human-like characteristics, leading to a more relatable and personalized experience. On the negative side, customers might become offended or upset at responses they experience as rude or uncaring.

- What it means for teams: Teams must stay aware of this concept to manage user expectations and ensure that users understand the capabilities and limitations of AI systems.

Artificial intelligence (AI)

AI is the broad concept of having machines think and act like humans. Generative AI is a specific type of AI (more on that below).

- What it means for customers: AI can help your customers by predicting what they’re likely to want next, based on what they’ve done in the past. It gives them more relevant communications and product recommendations, and can remind them of important upcoming tasks. (Like, “It’s time to reorder!”). Artificial Intelligence makes everything about their experience with your organization more helpful, personalized, efficient, and friction-free.

- What it means for teams: AI helps your teams work smarter and faster by automating routine tasks. This saves employees time, offers customers faster service, and provides more personalized interactions, all of which improves customer retention to drive the business.

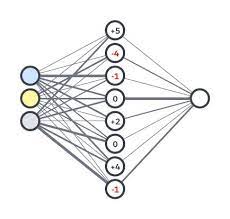

Artificial neural network (ANN)

An Artificial Neural Network (ANN) is a computer program that mimics the way human brains process information. Our brains have billions of neurons connected together, and an ANN (also referred to as a “neural network”) has lots of tiny processing units working together. Think of it like a team all working to solve the same problem. Every team member does their part, then passes their results on. In the end, you get the answer you need.

- What it means for customers: Customers benefit in all sorts of ways when ANNs are solving problems and making accurate predictions – like highly personalized recommendations that result in a more tailored, intuitive, and ultimately more satisfying customer experience. Neural networks are excellent at recognizing patterns, which makes them a key tool in detecting unusual behavior that may indicate fraud. This helps protect customers’ personal information and financial transactions.

- What it means for teams: Teams benefit, too. They can forecast customer churn, which prompts proactive ways to improve customer retention. ANNs can also help in customer segmentation, allowing for more targeted and effective marketing efforts. In a CRM system, neural networks could be used to predict customer behavior, understand customer feedback, or personalize product recommendations.

Augmented intelligence

Think of augmented intelligence as a melding of people and computers to get the best of both worlds. Computers are great at handling lots of data and doing complex calculations quickly. Humans are great at understanding context, finding connections between things even with incomplete data, and making decisions on instinct. Augmented intelligence combines these two skill sets. It’s not about computers replacing people or doing all the work for us. It’s more like hiring a really smart, well-organized assistant.

- What it means for customers: Augmented intelligence lets a computer crunch the numbers, but then humans can decide what actions to take based on that information. This leads to better service, marketing, and product recommendations for your customers.

- What it means for teams: Augmented intelligence can help you make better and more strategic decisions. For example, a CRM system could analyze customer data and suggest the best time for sales or marketing teams to reach out to a prospect, or recommend products a customer might be interested in.

Customer Relationship Management (CRM) with Generative AI

CRM is a technology that keeps customer records in one place to serve as the single source of truth for every department, which helps companies manage current and potential customer relationships. Generative AI can make CRM even more powerful — think personalized emails pre-written for sales teams, e-commerce product descriptions written based on the product name, contextual customer service ticket replies, and more.

- What it means for customers: A CRM gives customers a consistent experience across all channels of engagement, from marketing to sales to customer service and more. While customers don’t see a CRM, they feel the connection during every interaction with a brand.

- What it means for teams: A CRM helps companies stay connected to customers, streamline processes, and improve profitability. It lets your teams store customer and prospect contact information, identify sales opportunities, record service issues, and manage marketing campaigns, all in one central location. For example, it makes information about every customer interaction available to anyone who might need it. Generative AI amplifies CRM by making it faster and easier to connect to customers at scale – think marketing lead-gen campaigns automatically translated to reach your top markets across the globe, or recommended customer service responses that help agents solve problems quickly and identify opportunities for future sales.

Deep learning

Deep learning is an advanced form of AI that helps computers become really good at recognizing complex patterns in data. It mimics the way our brain works by using what’s called layered neural networks, where each layer is a pattern (like features of an animal) that then lets you make predictions based on the patterns you’ve learned before (ex: identifying new animals based on recognized features). It’s really useful for things like image recognition, speech processing, and natural-language understanding.

- What it means for customers: Deep learning-powered CRMs create opportunities for proactive engagement. They can enhance security, make customer service more efficient, and personalize experiences. For example, if you have a tradition of buying new fan gear before each football season, deep learning connected to a CRM could show you ads or marketing emails with your favorite team gear a month before the season starts so you’ll be ready on game day.

- What it means for teams: In a CRM system, deep learning can be used to predict customer behavior, understand customer feedback, and personalize product recommendations. For example, if there’s a boom in sales among a particular customer segment, a deep learning-powered CRM could recognize the pattern and recommend increasing marketing spend to reach more of that audience pool.

Discriminator (in a GAN)

In a Generative Adversarial Network (GAN), the discriminator is like a detective. When it’s shown pictures (or other data), it has to guess which are real and which are fake. The “real” pictures are from a dataset, while the “fake” ones are created by the other part of the GAN, called the generator (see generator below). The discriminator’s job is to get better at telling real from fake, while the generator tries to get better at creating fakes. This is the software version of continuously building a better mousetrap.

- What it means for customers: Discriminators in GANs are an important part of fraud detection. Using them leads to a more secure customer experience.

- What it means for teams: Discriminators in GANs helps your team evaluate the quality of synthetic data or content and aid in fraud detection and personalized marketing.

Ethical AI maturity model

An Ethical AI maturity model is a framework that helps organizations assess and enhance their ethical practices in using AI technologies. It maps out the ways organizations can evaluate their current ethical AI practices, then progress toward more responsible and trustworthy AI usage. It covers issues related to transparency, fairness, data privacy, accountability, and bias in predictions.

- What it means for customers: Having an ethical AI model in place, and being open about how you use AI, helps build trust and assures your customers that you are using their data in responsible ways.

- What it means for teams: Regularly evaluating your AI practices and staying transparent about how you use AI can help you stay aligned to your company’s ethical considerations and societal values.

Explainable AI (XAI)

Remember being asked to show your work in math class? That’s what we’re asking AI to do. Explainable AI (XAI) should provide insight into what influenced the AI’s results, which will help users to interpret (and trust!) its outputs. This kind of transparency is always important, but particularly so when dealing with sensitive systems like healthcare or finance, where explanations are required to ensure fairness, accountability, and in some cases, regulatory compliance.

- What it means for customers: If an AI system can explain its decisions in a way that customers understand, it increases reliability and credibility. It also increases user trust, particularly in sensitive areas like healthcare or finance.

- What it means for teams: XAI can help employees understand why a model made a certain prediction. Not only does this increase their trust in the system, it also supports better decision-making and can help refine the system.

Generative AI

Generative AI is the field of artificial intelligence that focuses on creating new content based on existing data. For a CRM system, generative AI can be used to create a range of helpful outputs, from writing personalized marketing content, to generating synthetic data to test new features or strategies.

- What it means for customers: Better and more targeted marketing content, which helps them get exactly the information they need and no more.

- What it means for teams: Faster builds for marketing campaigns and sales motions, plus the ability to test out multiple strategies across synthetic data sets and optimize them before anything goes live.

Generative adversarial network (GAN)

One of two deep learning models, GANs are made up of two neural networks: a generator and a discriminator. The two networks compete with each other, with the generator creating an output based on some input, and the discriminator trying to determine if the output is real or fake. The generator then fine-tunes its output based on the discriminator’s feedback, and the cycle continues until it stumps the discriminator.

- What it means for customers: They allow for highly customized marketing that uses personalized images or text – like custom promotional imagery for every customer.

- What it means for teams: They can help your development team generate synthetic data when there is a lack of customer data. This is particularly useful when privacy concerns arise around using real customer data.

Generative pre-trained transformer (GPT)

GPT is a neural network family that is trained to generate content. GPT models are pre-trained on a large amount of text data, which lets them generate clear and relevant text based on user prompts or queries.

- What it means for customers: Customers have more personalized interactions with your company that focus on their specific needs.

- What it means for teams: GPT could be used to automate the creation of customer-facing content, or to analyze customer feedback and extract insights.

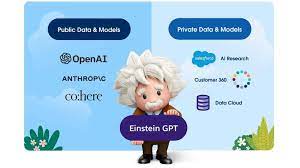

Say hello to Einstein

The world’s first generative AI for CRM lets you deliver AI-created content across every sales, marketing, service, commerce, and IT interaction, at scale. It’s a total game-changer for your company.

Generator

A generator is an AI-based software tool that creates new content from a request or input. It will learn from any supplied training data, then create new information that mimics those patterns and characteristics. ChatGPT by OpenAI is a well-known example of a text-based generator.

- What it means for customers: Using generators, it’s possible to train AI chatbots that learn from real customer interactions, and continuously create better and more helpful content.

- What it means for teams: Generators can be used to create realistic datasets for testing or training purposes. This can help your team find any bugs in a system before it goes live, and let new hires get up to speed in your system without impacting real data.

Grounding

Grounding in AI (also known as dynamic grounding) is about ensuring that the system understands and relates to real-world knowledge, data, and experiences. It’s a bit like giving AI a blueprint to refer to so that it can provide relevant and meaningful responses rather than vague and unhelpful ones. For example, if you ask an AI, “What is the best time to plant flowers?” an ungrounded response would be, “Whenever you feel like it!” A grounded response would tell you that it depends on the type of flower and your local environment. The grounded answer shows that AI understands the context of how a human would need to perform this task.

- What it means for customers: Customers receive more accurate and more relevant responses from grounded AI systems, leading to a more intuitive and satisfying user experience with predictable and expected outcomes.

- What it means for teams: When teams can develop AI systems that are more reliable and context-aware, they will be able to reduce errors and misunderstandings. Teams can still supervise interactions, but they will require less human intervention to stay accurate and helpful.

Hallucination

A hallucination happens when generative AI analyzes the content we give it, but comes to an erroneous conclusion and produces new content that doesn’t correspond to reality or its training data. An example would be an AI model that’s been trained on thousands of photos of animals. When asked to generate a new image of an “animal,” it might combine the head of a giraffe with the trunk of an elephant. While they can be interesting, hallucinations are undesirable outcomes and indicate a problem in the generative model’s outputs.

- What it means for customers: When companies monitor for and address this issue in their software, the customer experience is better and more reliable.

- What it means for teams: Quality assurance will still be an important part of an AI team. Monitoring for and addressing hallucinations helps ensure the accuracy and reliability of AI systems.

Human in the Loop (HITL)

Think of yourself as a manager, and AI as your newest employee. You may have a very talented new worker, but you still need to review their work and make sure it’s what you expected, right? That’s what “human in the loop” means — making sure that we offer oversight of AI output and give direct feedback to the model, in both the training and testing phases, and during active use of the system. Human in the Loop brings together AI and human intelligence to achieve the best possible outcomes.

- What it means for customers: Customers can trust that AI systems have been refined with human oversight, ensuring more accurate and ethical outputs.

- What it means for teams: Teams are able to actively shape and refine AI models, and their responses, so that they align with organizational goals and values. Keeping a human in the loop means your AI system will become better tailored to your organization’s needs.

Large language model (LLM)

An LLM is a type of artificial intelligence that has been trained on a lot of text data. It’s like a really smart conversation partner that can create human-sounding text based on a given prompt. Some LLMs can answer questions, write essays, create poetry, and even generate code.

- What it means for customers: Personalized chatbots that offer human-sounding interactions, allowing customers quick and easy solutions to common problems in ways that still feel authentic.

- What it means for teams: Teams can automate the creation of customer-facing content, analyze customer feedback, and answer customer inquiries.

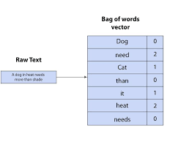

Machine learning

Machine learning is how computers can learn new things without being programmed to do them. For example, when teaching a child to identify animals, you show them pictures and provide feedback. As they see more examples and receive feedback, they learn to classify animals based on unique characteristics. Similarly, machine learning models generalize and apply their knowledge to new examples, learning from labeled data to make accurate predictions and decisions.

- What it means for customers: When a company better understands what customers value and want, it leads to enhancements in current products or services, or even the development of new ones that better meet customer needs.

- What it means for teams: Machine learning can be used to predict customer behavior, personalize marketing content, or automate routine tasks.

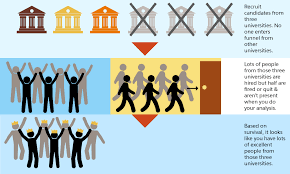

Machine learning bias

Machine learning bias happens when a computer learns from a limited or one-sided view of the world, and then starts making skewed decisions when faced with something new. This can be the result of a deliberate decision by the humans inputting data, by accidentally incorporating biased data, or when the algorithm makes wrong assumptions during the learning process, leading to biased results. The end result is the same — unjust outcomes because the computer’s understanding is limited and it doesn’t consider all perspectives equally.

Example: If a loan approval model is trained on historical data that shows a trend of approving loans for certain demographics (like gender or race), it may learn and perpetuate those biases in future outputs. This could lead to inaccurate predictions, biases, and offensive responses. This isn’t because of a prejudice in the system, but a bias in the training data. It will have huge implications for the accuracy and effectiveness of the system, as well as equality for – and trust from — customers.

- What it means for customers: Working with companies that actively engage in overcoming bias leads to more equitable experiences, and builds trust.

- What it means for teams: It’s important to check for and address bias to ensure that all customers are treated fairly and accurately. Understanding machine learning bias and knowing your organization’s controls for it helps your team have confidence in your processes.

Model

This is a program that’s been trained to recognize patterns in data. You could have a model that predicts the weather, translates languages, identifies pictures of cats, etc. Just like a model airplane is a smaller, simpler version of a real airplane, an AI model is a mathematical version of a real-world process.

- What it means for customers: The model can help customers get much more accurate product recommendations.

- What it means for teams: This can help teams to predict customer behavior, and segment customers into groups.

Natural language processing (NLP)

NLP is a field of artificial intelligence that focuses on how computers can understand, interpret, and generate human language. It’s the technology behind things like voice-activated virtual assistants, language translation apps, and chatbots.

- What it means for customers: NLP allows customers to interact with systems using normal human language rather than complex commands. Voice-activated assistants are prime examples of this. This makes technology more accessible and easier to use, improving user experiences.

- What it means for teams: NLP can be used to analyze customer feedback, power chatbots, or automate the creation of customer-facing content.

Parameters

Parameters are numeric values that are adjusted during training to minimize the difference between a model’s predictions and the actual outcomes. Parameters play a crucial role in shaping the generated content and ensuring that it meets specific criteria or requirements. They define the LLM’s structure and behavior and help it to recognize patterns, so it can predict what comes next when it generates content. Establishing parameters is a balancing act: too few parameters and the AI may not be accurate, but too many parameters will cause it to use an excess of processing power and could make it too specialized.

- What it means for customers: AI models with a higher number of parameters can better predict and generate human-like text, which means customers will benefit from more accurate and coherent responses.

- What it means for teams: Teams can fine-tune and optimize AI models more effectively, leading to improved performance and more reliable outputs, while not creating a system that wastes processing power needlessly, or becomes too specialized on a particular training data set.

Prompt defense

One way to protect against hackers and harmful outputs is by being proactive about what terms and topics you don’t want your machine learning model to address. Building in guardrails such as “Do not address any content or generate answers you do not have data or basis on,” or, “If you experience an error or are unsure of the validity of your response, say you don’t know,” are a great way to defend against issues before they arise.

- What it means for customers: Responses with information, terms, and topics that may be offensive, confusing, or incorrect aren’t provided.

- What it means for teams: Avoids headaches before they happen by making sure you aren’t providing information customers don’t want or topics you don’t want associated with your brand, or that might have legal ramifications with copyrights.

Prompt engineering

Prompt engineering means figuring out how to ask a question to get exactly the answer you need. It’s carefully crafting or choosing the input (prompt) that you give to a machine learning model to get the best possible output.

- What it means for customers: When your generative AI tool gets a strong prompt, it’s able to deliver a strong output. The stronger, more relevant the prompt, the better the end user experience.

- What it means for teams: Can be used to ask a large language model to generate a personalized email to a customer, or to analyze customer feedback and extract key insights.

Red-Teaming

If you were launching a new security system at your organization, you’d hire experts to test it and find potential vulnerabilities, right? The term “red-teaming” is drawn from a military tactic that assigns a group to test a system or process for weaknesses. When applied to generative AI, red-teamers craft challenges or prompts aimed at making the AI generate potentially harmful responses. By doing this, they are making sure the AI behaves safely and doesn’t inadvertently lead to any negative experiences for the users. It’s a proactive way to ensure quality and safety in AI tools.

- What it means for customers: Customers benefit from more robust and reliable AI systems that have been tested against potential vulnerabilities, ensuring a safer and more trustworthy user experience.

- What it means for teams (internal): Teams can identify and address potential vulnerabilities in AI systems, leading to more resilient and trustworthy models.

Reinforcement learning

Reinforcement learning is a technique that teaches an AI model to find the best result via trial and error, as it receives rewards or corrections from an algorithm based on its output from a prompt. Think about training an AI to be somewhat like teaching your pet a new trick. Your pet is the AI model, the pet trainer is the algorithm, and you are the pet owner. With reinforcement learning, the AI, like a pet, tries different approaches. When it gets it right, it gets a treat or reward from the trainer, and when it’s off the mark, it’s corrected. Over time, by understanding which actions lead to rewards and which don’t, it gets better at its tasks. Then you, as the pet owner, can give more specific feedback, making the pet’s responses refined to your house and lifestyle.

- What it means for customers: Customers benefit from AI systems that continuously improve and adapt based on feedback, especially human feedback. This helps to ensure more relevant and accurate interactions over time.

- What it means for teams: Your teams can use reinforcement learning to train AI models more efficiently, allowing for rapid improvement based on real-world feedback that’s customized to your usage.

Safety

AI safety is an interdisciplinary field focused on preventing accidents, misuse, or other harmful consequences that could result from AI systems. It’s how companies make sure these systems behave reliably and in line with human values, minimizing the harm and maximizing the benefits of AI.

- What it means for customers: When they know robust safety systems are in place, customers can trust that AI systems prioritize their well-being, ensuring a safer user experience.

- What it means for teams: Teams can develop and deploy AI systems with confidence, knowing that potential risks have been mitigated and that the system aligns with ethical standards and organizational values.

Sentiment analysis

Sentiment analysis involves determining the emotional tone behind words to gain an understanding of the attitudes, opinions, and emotions of a speaker or writer. It is commonly used in CRM to understand customer feedback or social media conversation about a brand or product. It can be prone to algorithmic bias since language is inherently contextual. It’s difficult for even humans to detect sarcasm in written language, so gauging tone is subjective.

- What it means for customers: Customers can offer feedback through new channels, leading to more informed decisions from the companies they interact with.

- What it means for teams: Sentiment analysis can be used to understand how customers feel about a product or brand, based on their feedback or social media posts, which can inform many aspects of brand or product reputation and management.

Supervised learning

Supervised learning is when a model learns from examples. It’s like a teacher-student scenario: the teacher provides the student (the model) with questions and the correct answers. The student studies these, and over time, learns to answer similar questions on their own. It’s really helpful to train systems that will recognize images, translate languages, or predict likely outcomes. (Check out unsupervised learning below).

- What it means for customers: Increased efficiency and systems that learn to understand their needs via past interactions.

- What it means for teams: Can be used to predict customer behavior or segment customers into groups, based on past data.

Toxicity

Toxicity is an umbrella term that describes a variety of offensive, unreasonable, disrespectful, unpleasant, harmful, abusive, or hateful language. Unfortunately, over time, humans have developed and used language that can cause harm to others. AI systems, just like humans, learn from everything they encounter. So if they’ve encountered toxic terms, they might use them without understanding that they’re offensive.

- What it means for customers: Customers can feel safer and more respected when interacting with platforms and services that actively monitor and mitigate toxicity. This ensures a more positive and inclusive user experience, free from harmful or offensive content.

- What it means for teams: The ability to create a more inclusive and respectful work environment by addressing toxicity. Tools that detect and eliminate toxic language can help you maintain a positive brand image, make your customers feel safer, and reduce the risk of PR crises.

Transformer

Transformers are a type of deep learning model, and are especially useful for processing language. They’re really good at understanding the context of words in a sentence because they create their outputs based on sequential data (like an ongoing conversation), not just individual data points (like a sentence without context). The name “transformer” comes from the way they can transform input data (like a sentence) into output data (like a translation of the sentence).

- What it means for customers: Businesses can enhance the customer service experience with personalized AI chatbots. These can analyze past behavior and provide personalized product recommendations. They also generate automated (but human-feeling) responses, supporting a more engaging form of communication with customers.

- What it means for teams: Transformers help your team generate customer-facing content, and power chatbots that can handle basic customer interactions. Transformers can also perform sophisticated sentiment analysis on customer feedback, helping you respond to customer needs.

Transparency

Transparency can often be used interchangeably with “explainability” – it helps people understand why particular decisions are made and what factors are responsible for a model’s predictions, recommendations, or outputs. Transparency also means being upfront about how and why you use data in your AI systems. Being clear and upfront about these issues builds a foundation of trust, ensuring everyone is on the same page and fostering confidence in AI-driven experiences.

- What it means for customers: When your customers can trust and understand AI-driven decisions, and understand how their data is used, they’ll have increased confidence in your products or services.

- What it means for teams: Teams can better explain and justify AI-driven decisions, leading to improved stakeholder trust and reduced risk of backlash within the organization.

Unsupervised learning

Unsupervised learning is the process of letting AI find hidden patterns in your data without any guidance. This is all about allowing the computer to explore and discover interesting relationships within the data. Imagine you have a big bag of mixed-up puzzle pieces, but you don’t have the picture on the box to refer to, so you don’t know what you’re making. Unsupervised learning is like figuring out how the pieces fit together, looking for similarities or groups without knowing what the final picture will be.

- What it means for customers: When we uncover hidden patterns or segments in customer data, it enables us to deliver completely personalized experiences. Customers will get the most relevant offers and recommendations, enhancing customer satisfaction.

- What it means for teams: The ability to get valuable insights and a new understanding of complex data. It enables teams to discover new patterns, trends, or anomalies that may have been overlooked, leading to better decision-making and strategic planning. This enhances productivity and drives innovation within the organization.

Validation

In machine learning, validation is a step used to check how well a model is doing during or after the training process. The model is tested on a subset of data (the validation set) that it hasn’t seen during training, to ensure it’s actually learning and not just memorizing answers. It’s like a pop quiz for AI in the middle of the semester.

- What it means for customers: Better-trained models create more usable programs, improving the overall user experience.

- What it means for teams: Can be used to ensure that a model predicting customer behavior or segmenting customers will work as intended.

Zero data retention

Zero data retention means that prompts and outputs are erased and never stored in an AI model. So while you can’t always control the information that a customer shares with your model (though it’s always a good idea to remind them what they shouldn’t include), you can control what happens next. Establishing security controls and zero data retention policy agreements with external AI models ensures that the information cannot be used by your team or anyone else.

- What it means for customers: Builds trust that the information they share will not be used for other purposes.

- What it means for teams: Removes the chance that information customers share with your model — be it Personally Identifiable Information (PII) or anything else — could be used in ways they, and you, would not want it to be.

Zone of proximal development (ZPD)

The Zone of Proximal Development (ZPD) is an education concept. For example, each year students progress their math skills from adding and subtracting, then to multiplication and division, and even up to complex algebra and calculus equations. The key to advancing is progressively learning those skills. In machine learning, ZPD is when models are trained on progressively more difficult tasks, so they will improve their ability to learn.

- What it means for customers: When your generative AI is trained properly, it’s more likely to produce accurate results.

- What it means for teams: Can be applied to employee training so an employee could learn to perform more complex tasks or make better use of the CRM’s features.